Today I attended the Open World Forum conference here in Paris. Basically, it’s a business-oriented networking conference for folks in the free/open source industry. Some of the panelists were really boring, such as the “French Secretary of State responsible for the Digital Economy”, who seemed to have deeply confused “free as in speech” and “open source” software, a grave mistake in my book. The other panelists, who knew the difference of course, regularly overemphasised “open source” software, while the notion of “free as in speech” was lost and rarely mentioned, with the notable exception of the Red Hat folks. Having explicitly released CryptoMiniSat under a free software licence, GPLv3, I of course disagreed.

The highlight of the conference for me was meeting the current Debian Project Leader, Stefano Zacchiroli, and researcher Roberto Di Cosmo. I have been using Debian for a very long time, and I have always wanted to contribute. However, the best way to contribute is always with your expertise, which for me is SAT solvers. So I approached Stefano with the idea of using CryptoMiniSat for configuration management in Debian (dpkg), where I think it would be a good fit: package dependencies and conflicts can be written as logical constraints, so even complex dependency problems could be handed to the solver. If included in dpkg, CryptoMiniSat could take the title of most widely deployed SAT solver from SAT4J, which currently holds it thanks to its inclusion in the Eclipse development package. Fingers crossed… and lots of work is ahead.
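To make the idea a bit more concrete, here is a minimal sketch of how package dependencies can be written as SAT clauses. The package names and constraints are invented for illustration, and the brute-force search only stands in for a real solver: it does not use CryptoMiniSat's API and says nothing about how dpkg works internally.

```python
from itertools import product

# Hypothetical packages, each mapped to a SAT variable number.
PACKAGES = {1: "editor", 2: "libgui", 3: "libtext-v1", 4: "libtext-v2"}

# CNF clauses: a positive literal means "installed", a negative one "not installed".
CLAUSES = [
    [1],         # the user asks for "editor"
    [-1, 2],     # editor depends on libgui
    [-1, 3, 4],  # editor depends on libtext-v1 OR libtext-v2
    [-2, 4],     # libgui depends on libtext-v2
    [-3, -4],    # libtext-v1 conflicts with libtext-v2
]


def satisfies(assignment, clauses):
    """Return True if every clause contains at least one true literal."""
    return all(
        any(assignment[abs(lit)] == (lit > 0) for lit in clause)
        for clause in clauses
    )


def solve(clauses, num_vars):
    """Try every assignment in turn (a stand-in for a real SAT solver).

    Returns a dict {variable: bool} or None if the clauses are unsatisfiable.
    """
    for values in product([False, True], repeat=num_vars):
        assignment = dict(zip(range(1, num_vars + 1), values))
        if satisfies(assignment, clauses):
            return assignment
    return None


def install_set(assignment):
    """Names of the packages set to True, or None if there is no solution."""
    if assignment is None:
        return None
    return sorted(PACKAGES[var] for var, value in assignment.items() if value)


if __name__ == "__main__":
    print(install_set(solve(CLAUSES, len(PACKAGES))))
    # Forcing libtext-v1 as well makes the request impossible to satisfy.
    print(install_set(solve(CLAUSES + [[3]], len(PACKAGES))))
```

A real solver replaces the exhaustive loop with clause learning and propagation, which is what makes instances with thousands of packages tractable; the encoding of "depends" and "conflicts" as clauses stays the same.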

Documenting code is not always so much fun. However, in order for the code to be extended by others, documentation needs to exist. Since I conceived

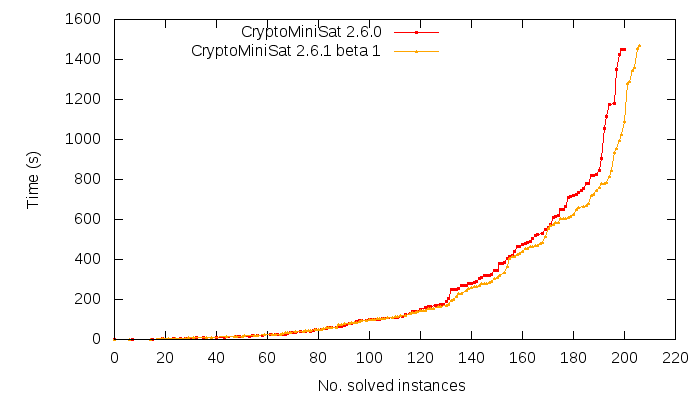

There is an
